What Happens When Efficiency Becomes Oppression?

The Lessons of the Zigeunerzentrale, the Zigeunerbuch and the Risks of AI-Enabled Targeting

👉 History offers chilling lessons. The line runs from the Zigeunerzentrale to modern AI-driven surveillance. Every era picks a virtue and worships it. Ours is efficiency.

Efficiency has no moral sense. It speeds up whatever you point it at. It doesn’t ask why. It doesn’t ask whether. It asks how fast, and how far. “Can AI optimize?” is the easy part. The harder question is when optimization quietly becomes control. What do you think? Share your thoughts in the comments! ⬇

If history taught us anything, it’s that harm doesn’t usually arrive as “new tech = evil”.

What shows up again and again is classification, then routine administration, then enforcement. Moral responsibility disappears into paperwork.

Speed isn’t the core danger here. Losing judgment is.

Judgment is the human capacity to pause, to weigh context, to say “this rule should not apply here”. Bureaucracies don’t need cruelty to do harm. They just need rules that won’t bend.

Sociologically, this is about how states govern through legibility. Once people are rendered sortable, comparable, and trackable, governance shifts from judgment to procedure. At that point, power no longer needs intent. It only needs compliance.

The Third Reich intensified the persecution. It didn’t invent it, and it didn’t end it. For centuries, Sinti and Roma have been scapegoats: marginalized and systematically targeted under laws meant to “civilize” but designed to exclude.

The Zigeunerbuch was prejudice built into infrastructure.

Infrastructure keeps running without hatred. It runs on categories that outlive the people who created them. When a state turns identity into a searchable record, “efficiency” stops being neutral. AI won’t invent oppression from scratch. But it can accelerate it, standardize it, and disguise it as routine administration.

AI changes the game by changing what can be inferred.

Speed is the obvious change. Inference is the dangerous one.

Weber’s name for this was instrumental rationality: rationalization cut loose from values. Efficiency with no anchor.

Old systems mostly recorded what officials already believed.

AI can predict, infer, and rank. Then the ranking quietly becomes policy.

What begins as a recommendation hardens into a norm.

And norms rarely announce themselves as moral choices.

Terminology note: “Sinti” and “Roma” refer to distinct communities within the broader Romani peoples. In German-speaking contexts, “Sinti und Roma” is often used to respect that distinction while acknowledging a shared history of persecution. (Links and longer references are at the end.)

📘Optional Reading:

📌Sinti: The Sinti are a subgroup of the Romani people, primarily located in Central Europe, including Germany, Austria, and parts of Italy, France, and the Netherlands. The Sinti have a distinct cultural and linguistic identity, often using their own dialect of Romani, influenced by the languages of the regions where they live. Historically, they have faced severe discrimination and were targeted for persecution during the Holocaust.

📌Roma: The Roma are the larger and more diverse group within the Romani people, spread across Europe and other parts of the world. They are culturally and linguistically diverse, with many subgroups defined by their geographical and historical roots. Roma communities have also been subjected to systemic discrimination and persecution, including during the Holocaust, where many were victims of genocide alongside the Sinti.

By the late 19th century, this prejudice solidified into institutionalized oppression, culminating in centralized surveillance systems like Germany’s Zigeunerzentrale, a “Gypsy Central Office” founded in Munich in 1899 to systematically monitor and control Sinti and Roma communities, which became a bureaucratic precursor to the horrors of the Holocaust. That date matters. It shows the logic of modern governance at work before the Nazis, the state’s impulse to turn “social problems” into files.

What’s most chilling is how its legacy lingered: post-war police forces reused its data, carrying a thread of discrimination through supposedly fresh democratic systems.

You can write these systems off as relics. That’s the temptation. Then the questions show up anyway.

What if the tools of oppression were armed with the power and efficiency of today’s technology, especially AI?

Would AI amplify discrimination, or could we use it to dismantle these systems instead?

History doesn’t simply echo; it haunts. And the story of the Zigeunerzentrale reminds us how easily administrative tools, meant for order, can metastasize into mechanisms of harm and cruelty.

This post includes deeply personal content about my ancestors and their suffering, which tragically represents just one aspect of the broader atrocities my family endured during the Third Reich.

I’m using this example because it hits close to home, and because it points to a broader truth: there are countless similar examples of how systemic oppression has been fueled by bureaucracy and technology throughout history. Each one serves as a vital lesson for ensuring such tools are never misused again.

Philosophy insists on this move: from the singular case to the general warning, without losing the human face along the way.

📌 Terms like "Zigeuner," which is what Romani people have traditionally been called in German, are deeply offensive and rooted in prejudice. They are used here solely to reflect historical terminology for accuracy and to confront the injustices of the past. Using the common English slur “gypped”, derived from “Gypsy”, for example, might seem harmless, but it comes from the stereotype that Romani people are swindlers. That narrative fueled centuries of exclusion and persecution.

Words like that shape how people think.

📌 The Nazis specifically targeted Sinti and Roma, which are two distinct groups within the broader Romani people. While "Romani people" is a broader, politically correct, and respectful term encompassing many groups across Europe, including those not specifically targeted by the Nazis, Sinti and Roma were the primary targets of Nazi policies of persecution and genocide.

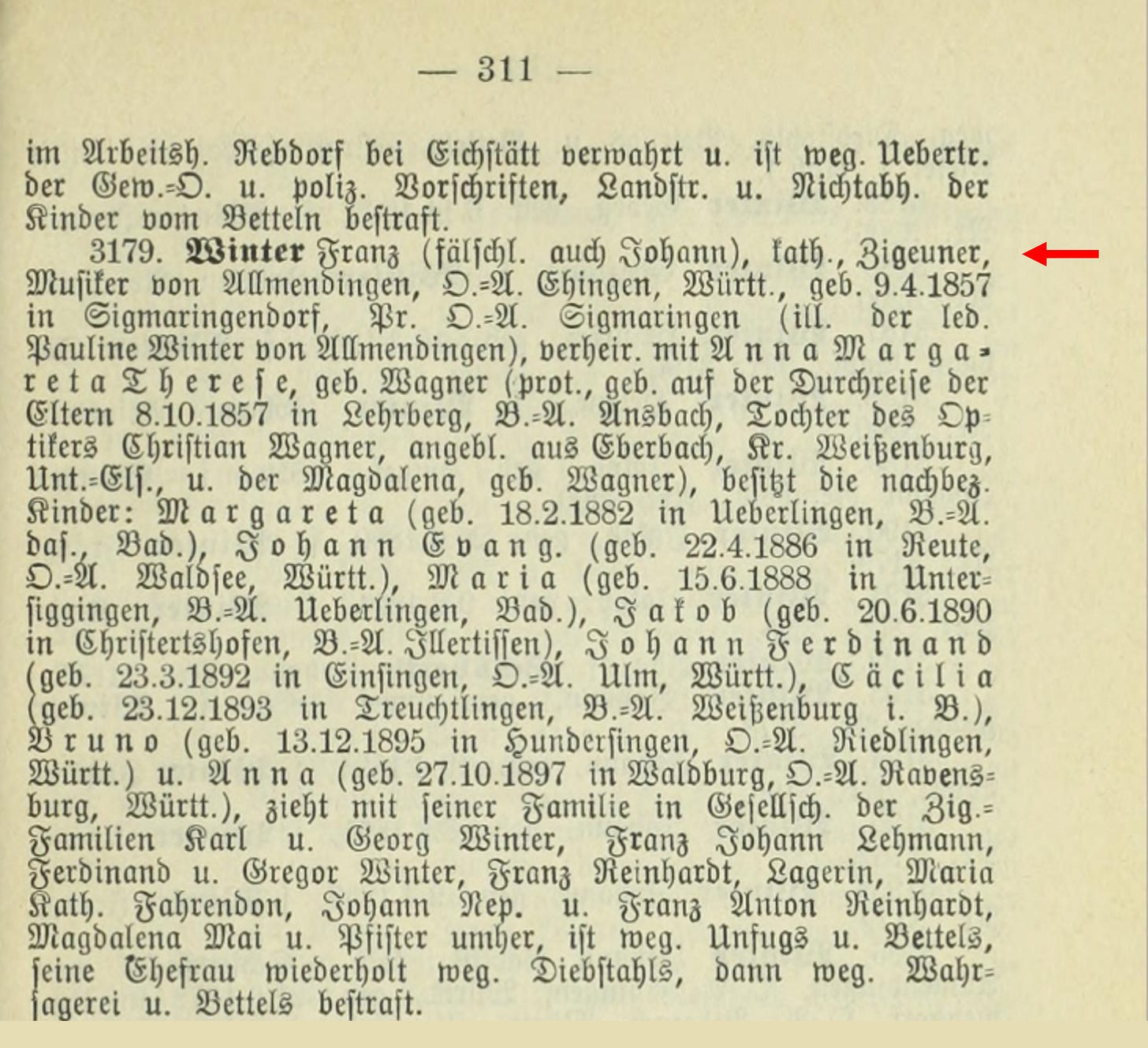

Zigeunerzentrale and Zigeunerbuch: Systematic Oppression Through Data

The Zigeunerzentrale (the common name for the Nachrichtendienst für die Sicherheitspolizei in Bezug auf Zigeuner, or “Intelligence Service for the Security Police Regarding Gypsies”) began as a regional initiative and evolved into the Reichszentrale zur Bekämpfung des Zigeunerunwesens (Reich Central Office for the Combatting of the Gypsy Nuisance), a national instrument of persecution under the Nazi regime. Its mission was clear: to use modern surveillance and centralized data collection to categorize, monitor, and ultimately oppress an entire group of people.

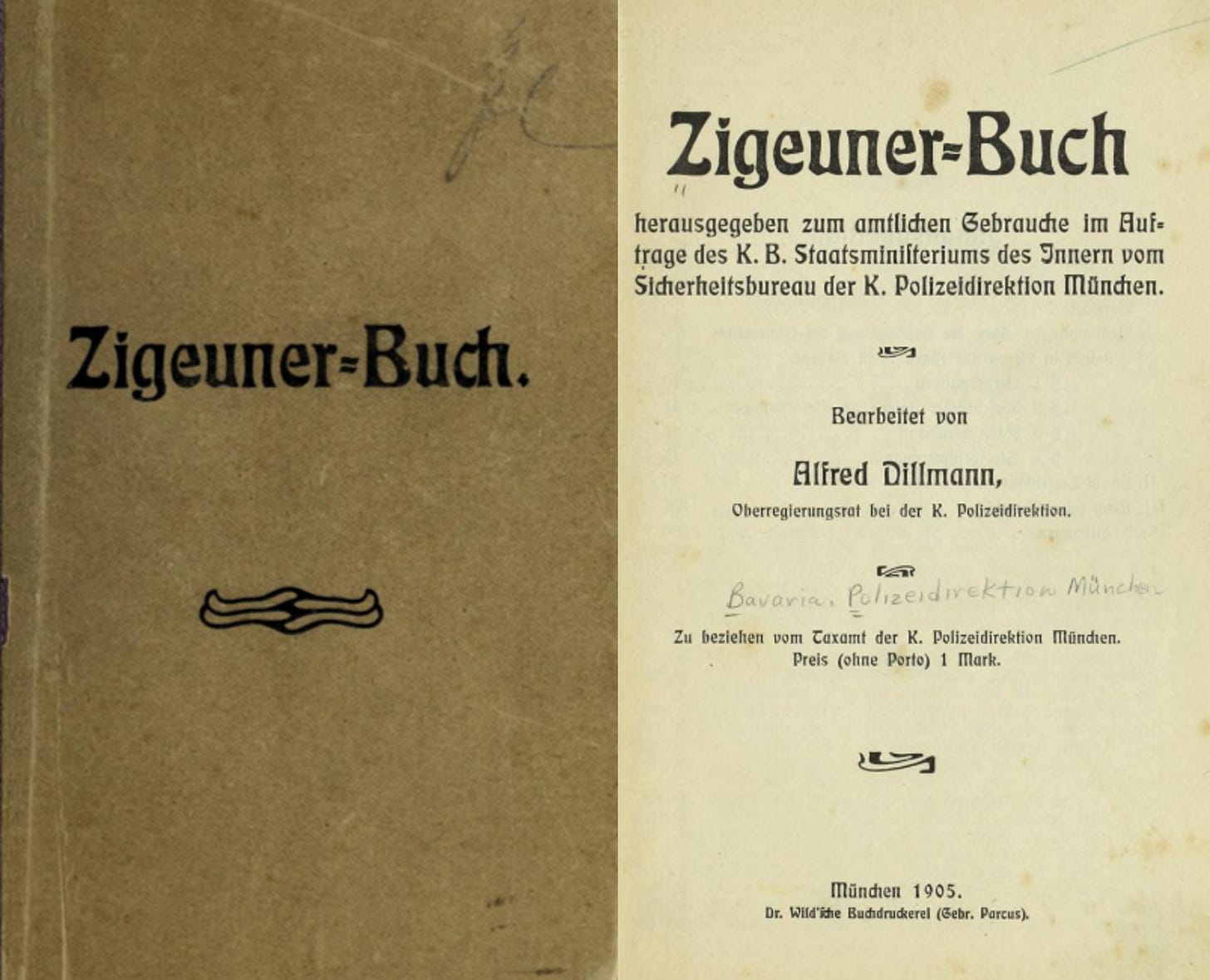

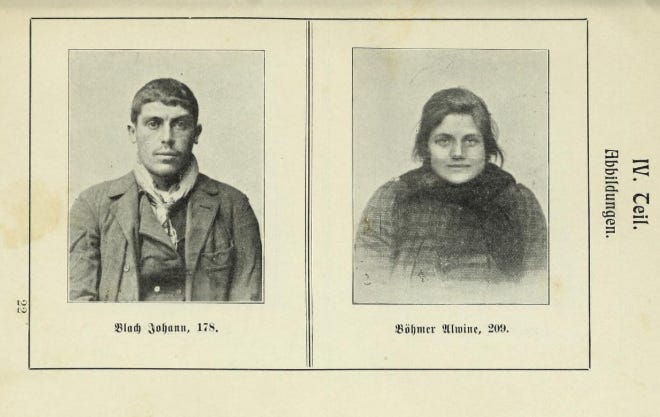

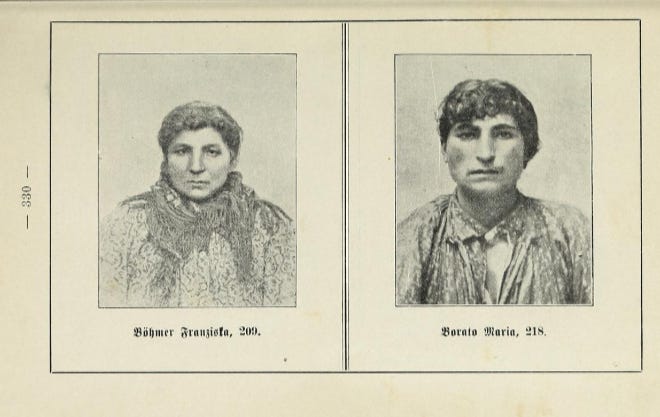

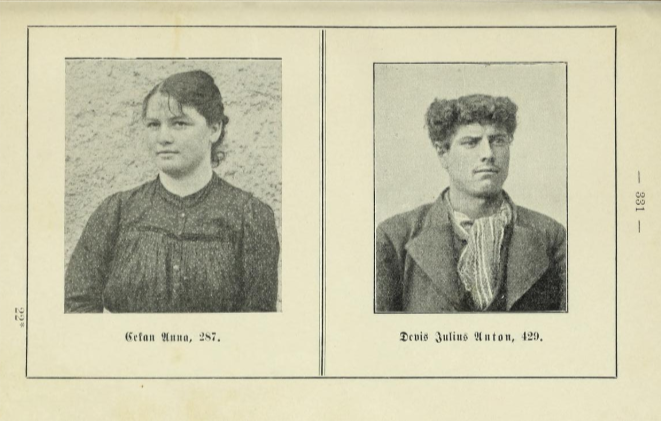

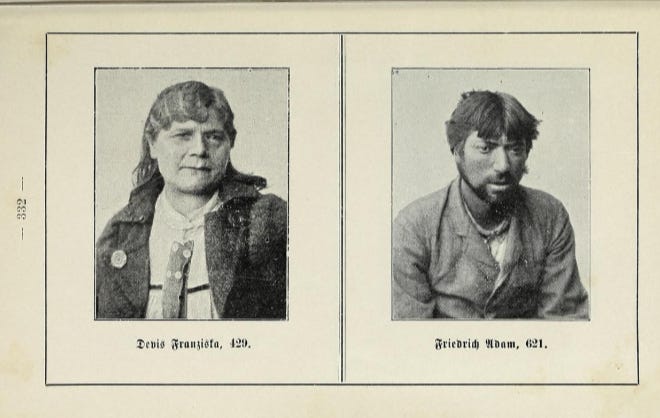

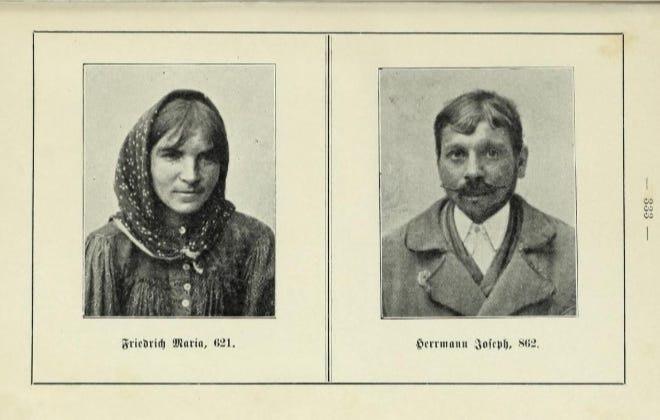

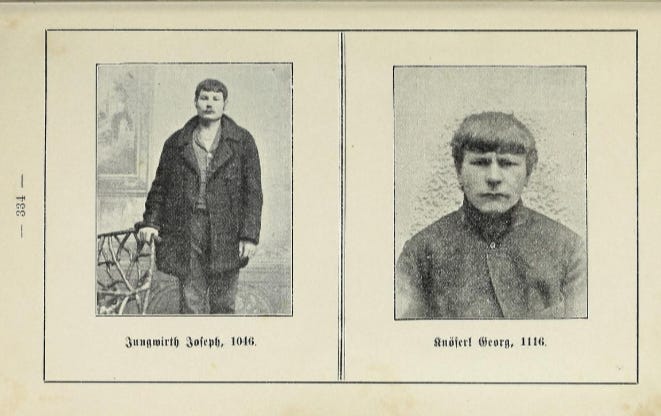

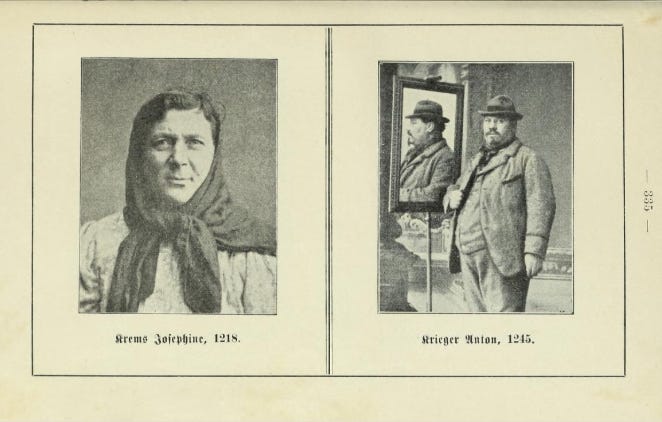

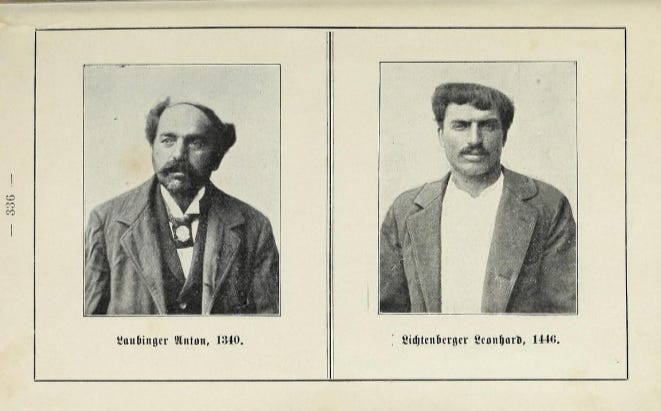

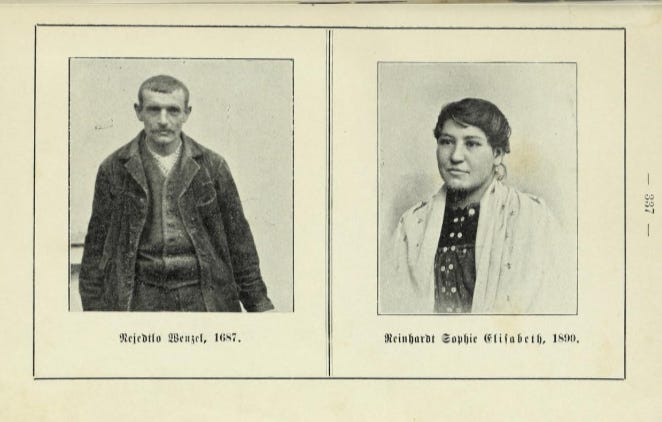

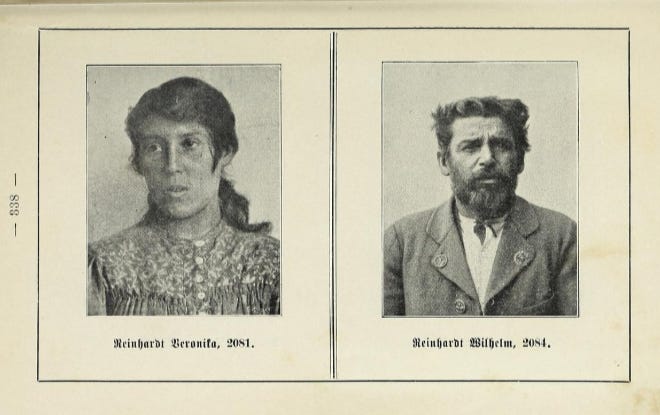

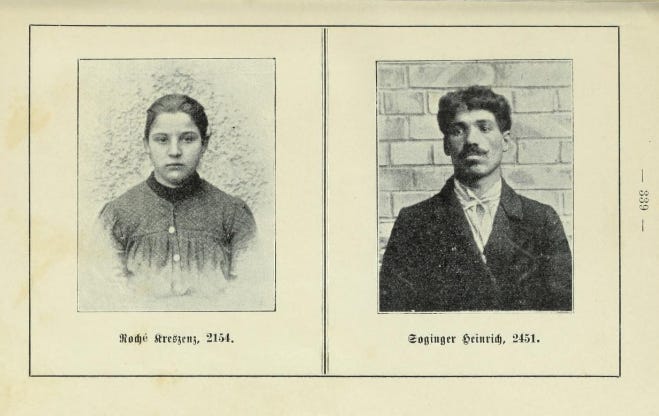

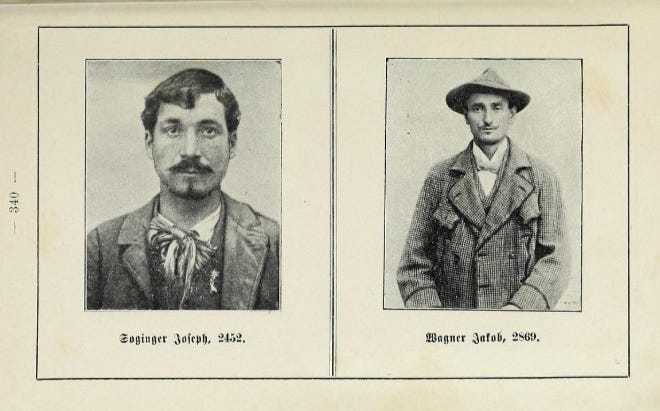

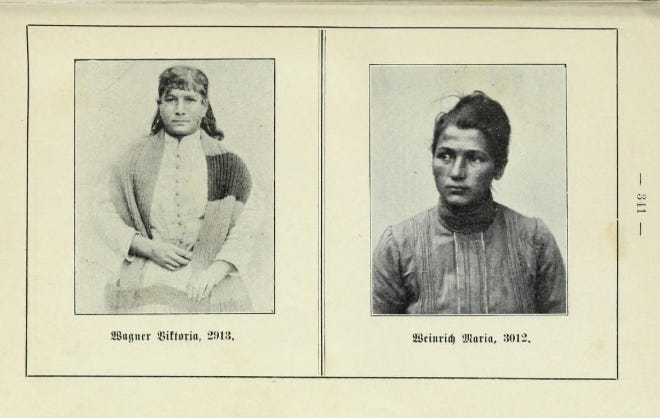

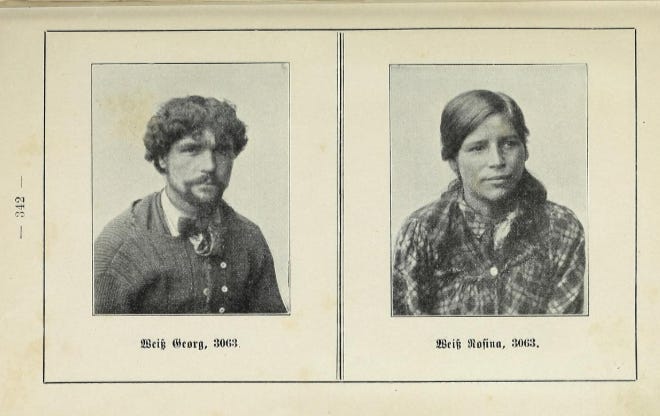

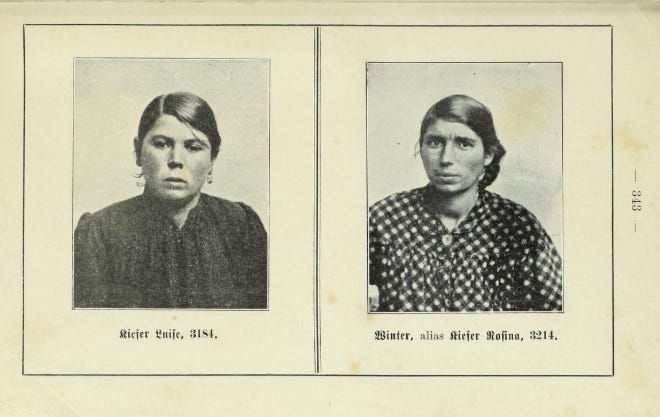

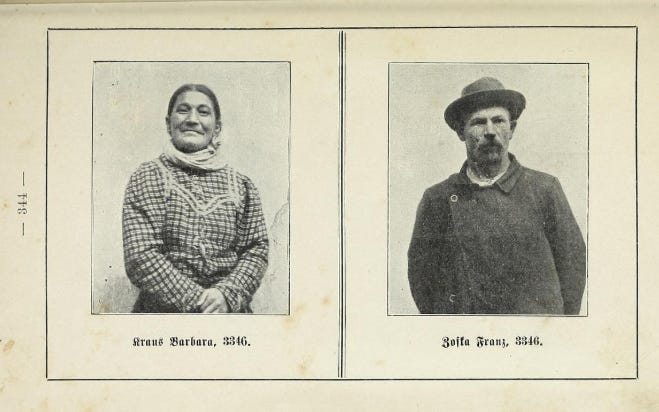

One of its most infamous products was the Zigeunerbuch, a comprehensive catalog created in 1905. This document contained personal details, photographs, and genealogical information on over 3,350 individuals. Distributed to thousands of authorities across Germany, the Zigeunerbuch became a weapon of systemic discrimination, stigmatizing Sinti and Roma as criminals and outsiders. It laid the groundwork for more centralized systems later employed by the Nazis, facilitating mass deportations and the Porajmos - the genocide of the Roma people during the Holocaust.

📌 Terminology note: “Porajmos” is a term used (especially in academic and activist contexts) for the Nazi genocide of Roma and Sinti. It isn’t universally used within Romani communities. No one in my family used it. I’m including it here because readers may encounter it in the literature.

Starting here, I’m including photos of 32 people whose images appear across 16 pages of the Zigeunerbuch. I’m doing this for one reason: to make it harder to stay abstract. These were human beings with families, ordinary lives, and names that ended up in the wrong system.

A Personal Connection – The Zigeunerbuch and My Family

For me, the Zigeunerbuch isn’t an abstract example. It’s family history.

The Zigeunerbuch is more than a historical artifact to me; it is a deeply personal and painful reminder of how bureaucracy can be weaponized to dehumanize and destroy.

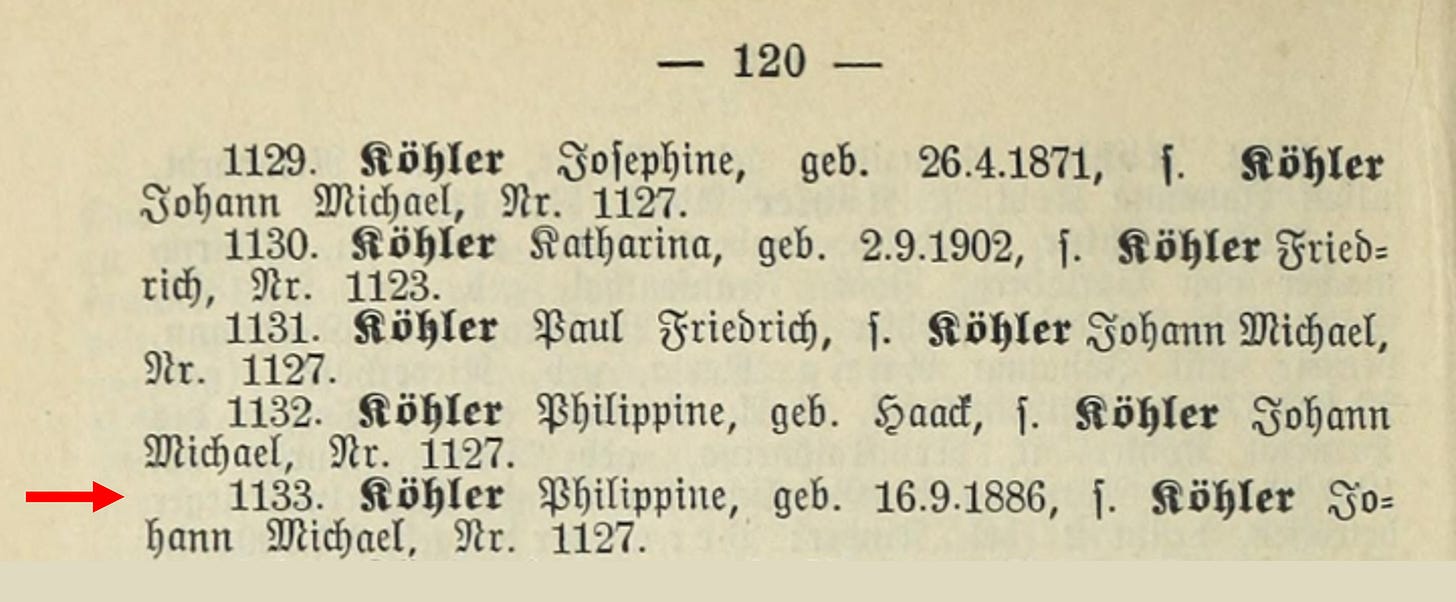

Both my great-grandparents and great-great-grandparents on my father’s side were listed in the Zigeunerbuch, a catalog that reduced human lives to entries in a ledger.

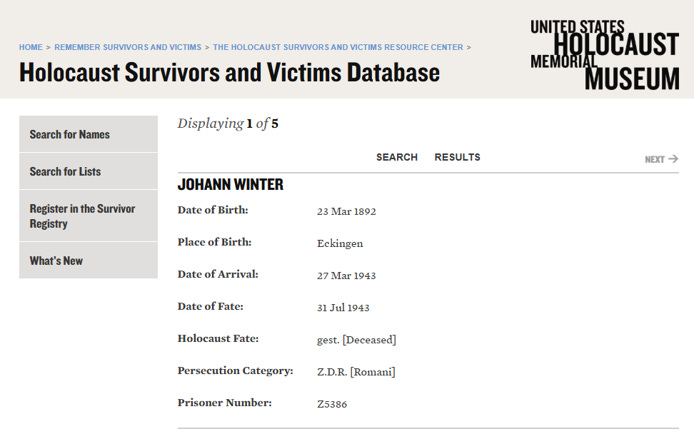

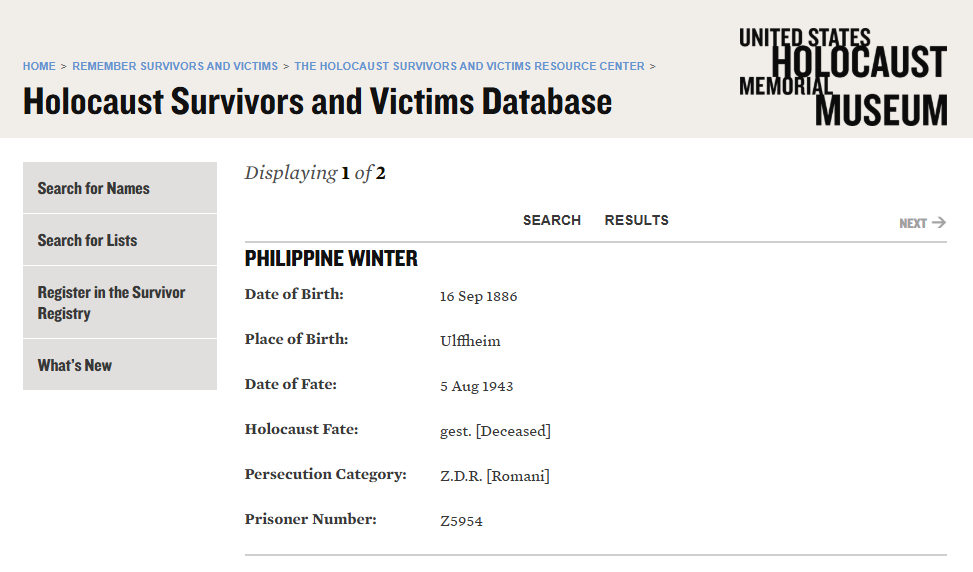

For my great-grandparents, Johann and Philippine Winter, their inclusion in the Zigeunerbuch marked the beginning of the end.

✝️ Both were deported to Auschwitz, where they were murdered in the summer of 1943, at the ages of 51 and 56.

✝️ Their youngest son was also killed there.

Their prisoner numbers were “Z5386” and “Z5954”. The letter “Z” stands for “Zigeuner”, which is German for “Gypsy”.

At Auschwitz, uniquely within the camp system, tattooing became part of identification, and by the spring of 1942 the SS ordered prisoner numbers tattooed on the left forearm. I can’t confirm whether they were tattooed. What I can confirm is that their prisoner numbers were used to mark and track them: their clothes bore these numbers, along with a brown triangle made of fabric sewn onto their jackets and trousers, identifying them as “Zigeuner”. Roma and Sinti were often classified as ‘asocial’ and marked accordingly (commonly black; in some camps, brown).

My grandfather, Anton Winter, one of their surviving children, was "lucky": because he was serving in the German army before the Nazis discovered he was a Sinto, he was “only” forcibly sterilized.

📌 Sinti are a subgroup of the Romani people in Central Europe, with specific terms used to distinguish male and female members:

📌 Sinto: A Sinto (plural: Sinti) refers to a male member of the Sinti, a subgroup of the Romani people who primarily live in Central Europe, including Germany, Austria, and the Netherlands. The term is used to denote cultural and ethnic identity within the broader Romani community.

📌 Sintezza: A Sintezza is the feminine equivalent, referring to a female member of the Sinti community.

Now, I’m going to share a glimpse into my father’s side of the family and what I’ve uncovered in the Zigeunerbuch about my great-grandparents and great-great-grandparents.

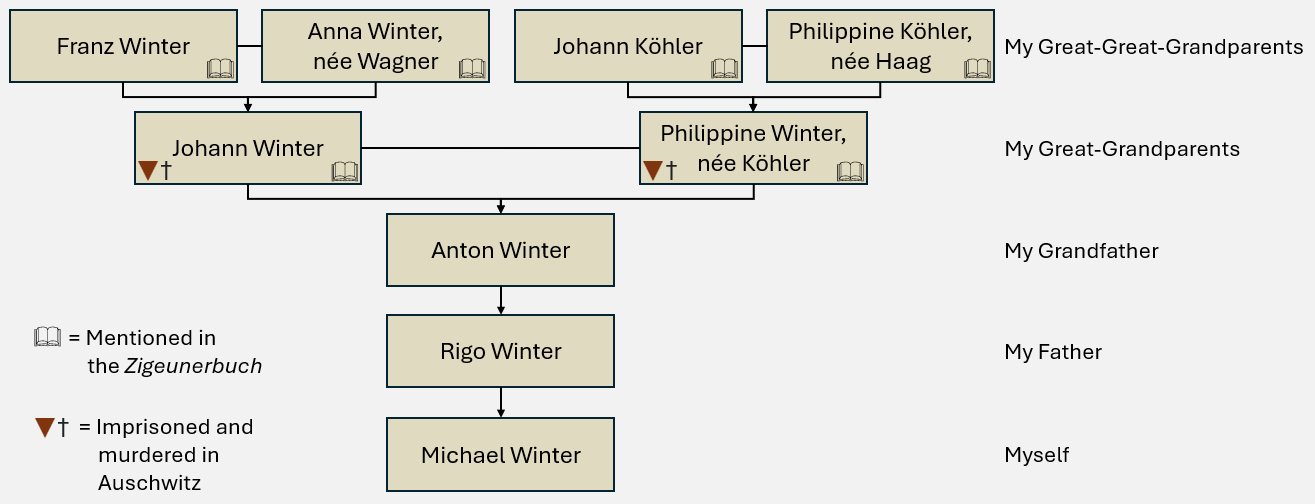

Above is a portion of my family tree, focusing on my father’s lineage - specifically, his paternal side. All six of his paternal grandparents and great-grandparents were listed in the Zigeunerbuch, and both of his paternal grandparents were imprisoned and murdered in Auschwitz.

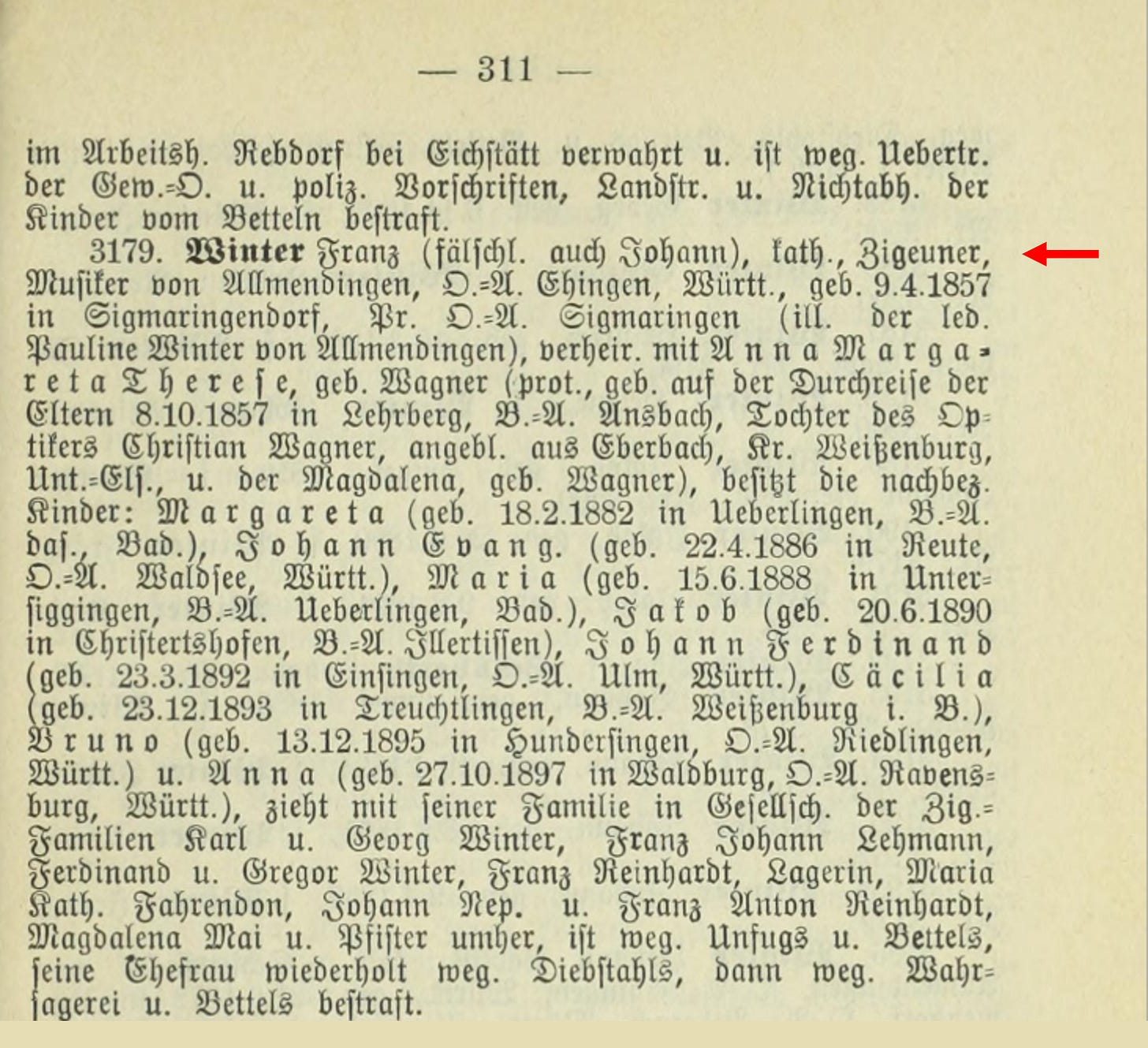

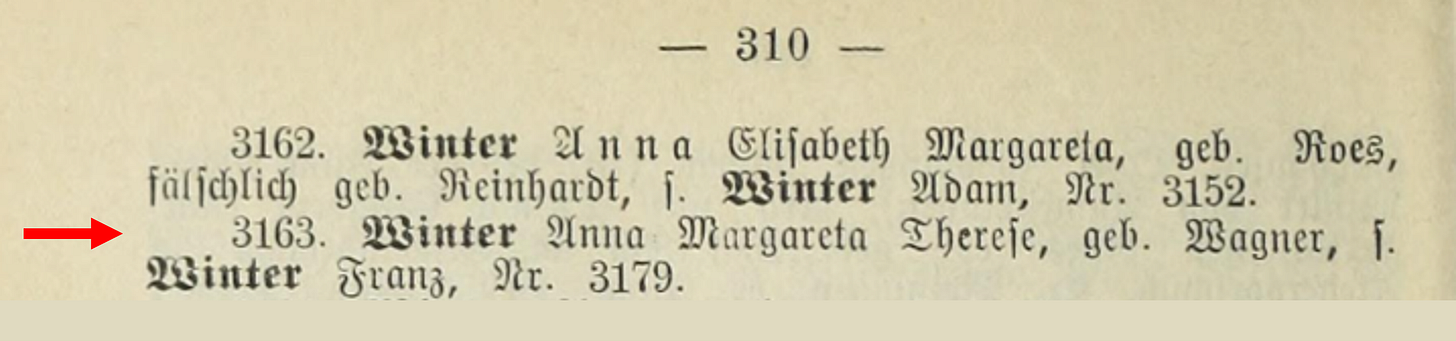

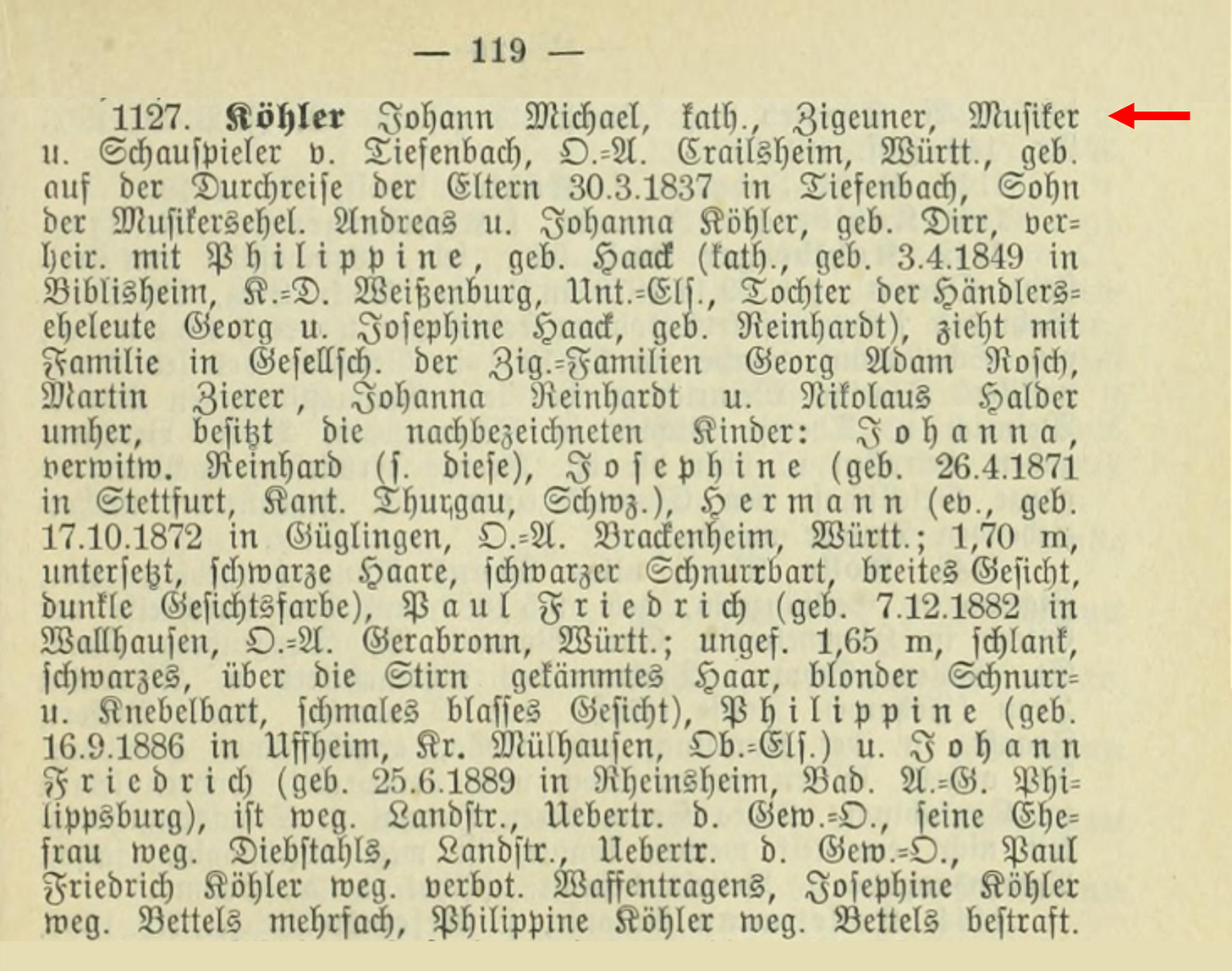

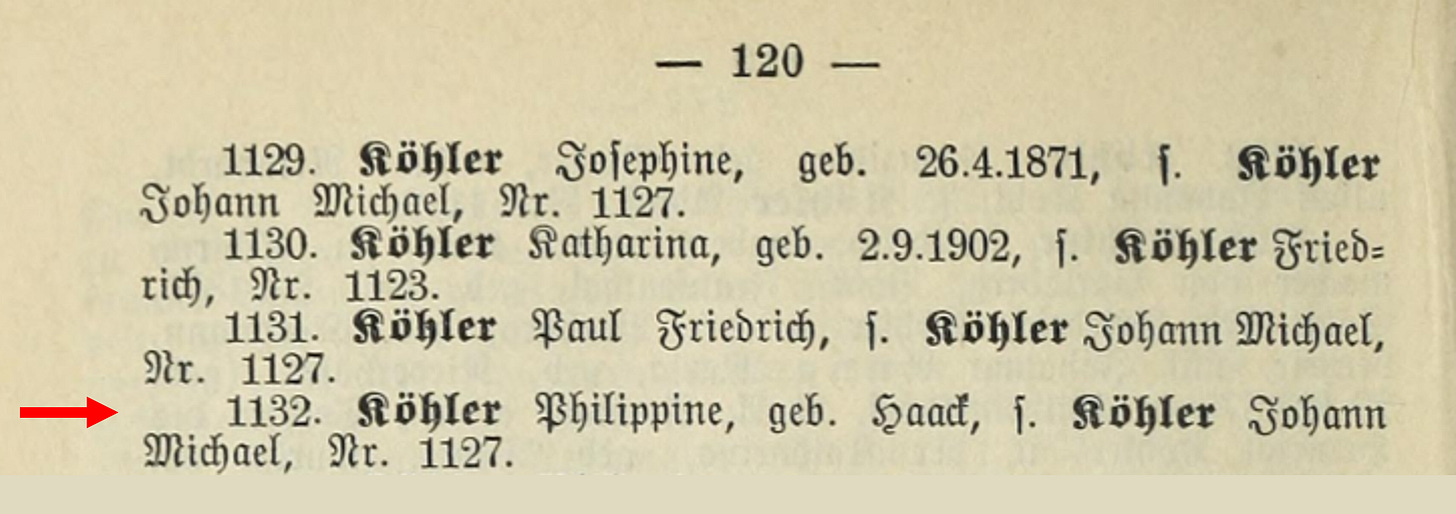

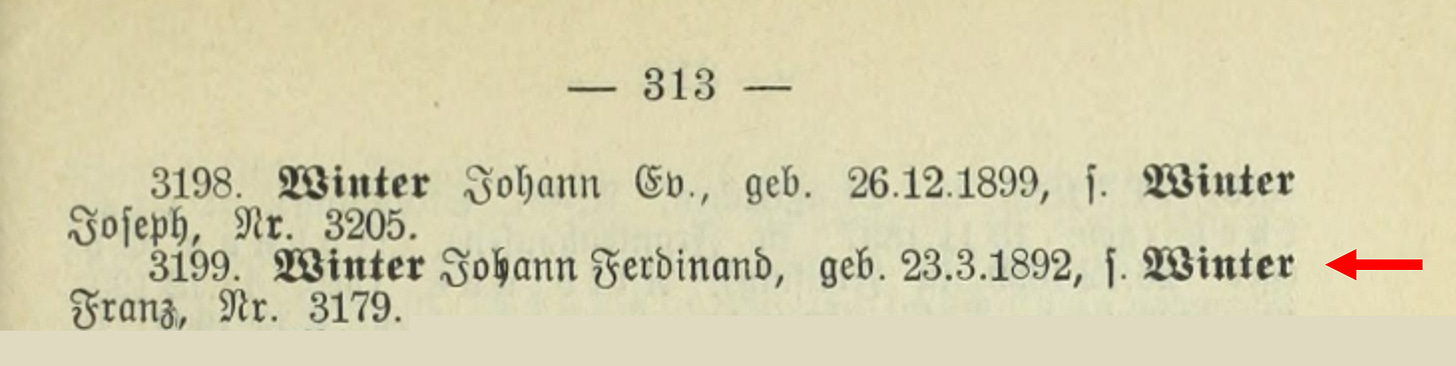

I’m going to translate this for you, as it is not only in German but also very difficult to decipher due to the old font type called 'Fraktur.' Fraktur is a type of blackletter script that was commonly used in German documents, books, and official records until the mid-20th century. It was widely used in historical texts, including the Zigeunerbuch, making it a distinctive and recognizable style associated with older German typography.

Winter, Franz (falsely also Johann), Catholic, "Gypsy," musician from Allmendingen, District Office Ehingen, Württemberg, born September 4, 1857, in Sigmaringendorf, District Office Sigmaringen (illegitimate son of the unmarried Pauline Winter from Allmendingen), married to Anna Margareta Therese, née Wagner (Protestant, born while her parents were traveling on October 8, 1857, in Lehrberg, District Office Ansbach, daughter of optician Christian Wagner, reportedly from Eberbach, District Weissenburg, Unterfranken, and Magdalena, née Wagner).

He has the following children:

Margareta (born February 18, 1882, in Überlingen, District Office Überlingen, Baden),

Johann Evangelist (born April 22, 1886, in Reute, District Office Waldsee, Württemberg),

Maria (born June 15, 1888, in Untersiggingen, District Office Überlingen, Baden),

Jakob (born June 20, 1890, in Christertshofen, District Office Illertissen, Württemberg),

Johann Ferdinand (born March 23, 1892, in Einsingen, District Office Ulm, Württemberg),

Cäcilia (born December 23, 1893, in Treuchtlingen, District Office Weißenburg, Bavaria),

Bruno (born December 13, 1895, in Hundersingen, District Office Riedlingen, Württemberg), and

Anna (born October 27, 1897, in Waldburg, District Office Ravensburg, Württemberg).

Franz Winter moves with his family in the company of other “Gypsy” families: Karl and Georg Winter, Franz Johann Lehmann, Ferdinand and Gregor Winter, Franz Reinhardt, Lagerin, Maria Rath. Fahrendon, Johann Nep. and Franz Anton Reinhardt, Magdalena Mai and Pfister.

He has been punished for disorderly conduct* and begging. His wife has repeatedly been punished for theft, fortune-telling, and begging.

* The German term "Unfug" translates to "nonsense", "mischief", or "misconduct" in English, depending on the context. In the historical and legal context of the text, it likely refers to “disorderly conduct” or “improper behavior”, actions considered disruptive or inappropriate by the authorities at the time.

This is a remarkably detailed description of my great-great-grandfather, Franz Winter, and it reflects nearly all of the popular prejudices against Romani people at the time. It labels him as a "Gypsy" and highlights his family’s mobility, their association with other Romani families, and their professions, such as music and fortune-telling, while criminalizing behaviors like begging and "disorderly conduct". The inclusion of these accusations, often grounded in stereotypes rather than actual offenses, paints a picture of systemic bias and marginalization that Romani communities, including my ancestors, faced in historical records like this.

Reading this entry is nauseating. It’s not just recordkeeping. It’s prejudice turned into an official voice, complete with the usual stereotypes: criminality, “mischief”, begging, mobility treated as a threat.

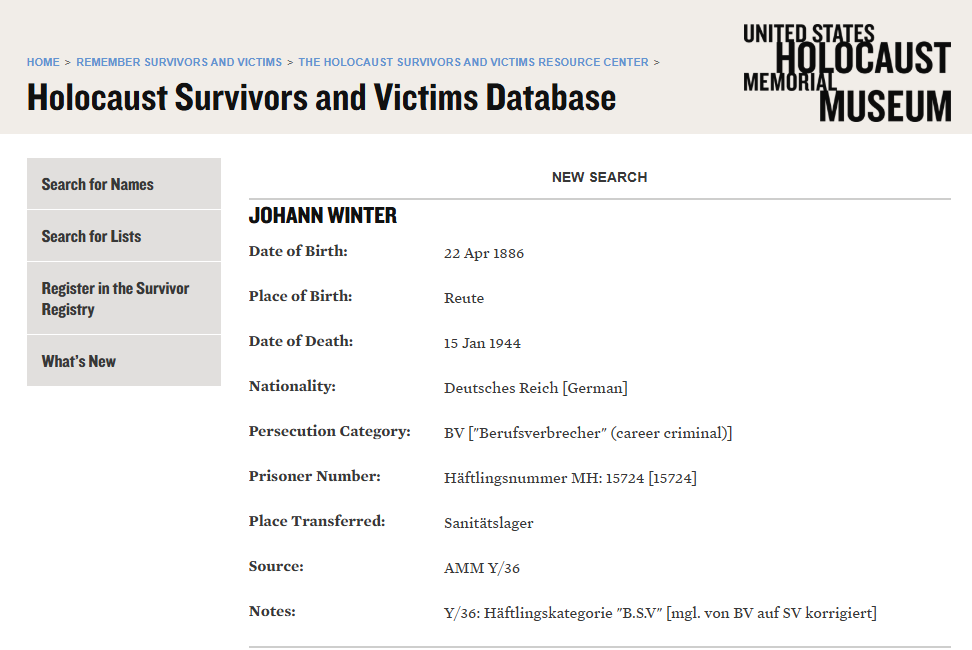

By the way, among the children of my great-great-grandfather Franz Winter listed above, it wasn’t only my great-grandfather Johann Ferdinand who was murdered by the Nazis. Another of Franz’s sons, Johann Evangelist, was also killed by them. Declared a "career criminal," he died on January 15, 1944, at the age of 57 in the "medical camp" of Mauthausen concentration camp. His prisoner number was “MH15724.” In historical contexts, especially during the Nazi era, the term “medical camp” was often used euphemistically for facilities that were part of the concentration camp system. Despite the name implying medical care, these camps frequently lacked proper medical treatment and were instead sites of inhumane conditions and atrocities. The exact meaning can depend on the specific historical or contextual usage.

His prisoner category was “BSV”, which could indicate that he was first classified “BV” and later reclassified “SV”, or it could be a combination of both. In this context, BV stood for “Berufsverbrecher”, which is German for “career criminal”, and SV likely stood for "Sicherungsverwahrung", which translates to "preventive detention." It was a category used by the Nazi regime to classify individuals who were deemed a continuing threat to society even after serving their formal prison sentence. This category was sometimes applied to those labeled as habitual offenders or individuals considered undesirable based on discriminatory Nazi policies. This ambiguity underscores the fluid and often arbitrary nature of Nazi prisoner classifications, which were influenced by ideological and discriminatory motives rather than consistent legal standards.

You can find the records for my other five paternal great-grandparents and great-great-grandparents from the Zigeunerbuch and the Holocaust Survivors and Victims Database in the optional reading section at the end of this post.

My family history underscores the chilling efficiency of the Zigeunerbuch and its role as a precursor to mass genocide. It was not just a tool of surveillance. This wasn’t “data” in the abstract. This was data used as a weapon. It was a mechanism of destruction that facilitated unimaginable atrocities. It was a system that helped decide who would live and who would disappear. Knowing that my ancestors were part of this tragic story reminds me of the stakes involved when discussing the ethical use of data and technology.

As I reflect on how systems like the Zigeunerbuch could re-emerge in today's context, the danger feels immediate and personal. Modern technologies such as AI, sensors, and biotechnology could enable oppression on a scale far greater than what was possible in the early 20th century. These tools, in the wrong hands, have the potential to replicate the injustices that claimed the lives of so many, including my family.

This connection to my family’s history reinforces my commitment to advocating for ethical safeguards and systemic reforms. Preventing misuse of technology matters. So does honoring the people who suffered, and making sure this never happens again. The story of the Zigeunerbuch is a warning, and for me, it’s also a call to action.

My great-grandparents’ and great-great-grandparents’ story is tragically not unique. It represents the silent devastation inflicted on thousands, whose lives and freedoms were shattered by a ledger designed to dehumanize. The sociologically chilling part is how ordinary this story is. That ordinariness is the danger.

Imagine all the people whose names and/or photos appeared in the Zigeunerbuch. One morning, they woke up to find authorities using this ledger to restrict their movements and question their livelihood. Their identity, reduced to a criminal profile through bureaucratic records, became a weapon of systemic persecution.

The persecution and marginalization of the Sinti community created a vicious cycle. Systematic exclusion from education, housing, and legitimate employment opportunities forced some individuals into criminality as a means of survival. Authorities then used that to justify further stigmatization and oppression, fulfilling the very stereotypes they had imposed. Yet, the reality was far less alarming than propaganda suggested. The problem of Sinti crime was exaggerated, perpetuated by authorities and public discourse eager to scapegoat an already marginalized group. Authorities selectively interpreted mobility and poverty as criminality, then used their own records as “proof” - a feedback loop that made prejudice look empirical. For my great-grandparents, my great-great-grandparents, and many others, the system offered no chance for redemption, only relentless surveillance and preordained condemnation.

The establishment of the Zigeunerzentrale was preceded by intense political debates about combating the so-called "Gypsy plague" (a dehumanizing phrase used in historical political debate). Yet, as Angelika Albrecht’s research into the police perception and handling of this issue in Bavaria from 1871 to 1914 reveals, these fears were far from grounded in reality. The idea of an "influx" of Sinti and Roma was largely a fabrication, driven by police reports and crime statistics that painted an exaggerated picture. Even deeply entrenched stereotypes about criminality failed to hold up under scrutiny. For example, the widely held belief, perpetuated even within government circles, that Sinti and Roma frequently committed arson had no basis in fact. In reality, from 1871 to 1914, there was only a single documented case of a Romani individual being tried for arson in all of Bavaria.

Systematic Oppression: How Data Was Weaponized Against Sinti and Roma Communities

Here’s a breakdown of how this data was systematically weaponized against Sinti and Roma communities in ways that escalated their marginalization and oppression over time:

➡️ Criminalization and Stigmatization: Authorities used the Zigeunerbuch to equate Roma and Sinti people with criminals and vagrants. They relied on it to justify surveillance, arrests, and punitive actions, even when no crimes had been committed. The stigmatization of being listed in the Zigeunerbuch alone was enough to warrant suspicion.

➡️ Centralized Monitoring: Authorities across Germany shared and cross-referenced the Zigeunerbuch to track the movements and activities of Roma and Sinti populations. This systematic tracking denied them the ability to freely travel, work, or settle, further isolating them from society.

➡️ Legislative Enforcement: Information from the Zigeunerbuch informed discriminatory laws and policies, such as the requirement for Roma and Sinti individuals to register with local police. These laws curtailed their rights and facilitated targeted interventions, including forced relocations and restricted residence.

➡️ Administrative Efficiency in Oppression: The distribution of the Zigeunerbuch across thousands of authorities streamlined the persecution process. Police and administrative officials could quickly access detailed profiles to identify and act against individuals, making the oppression bureaucratic and systematic.

➡️ Mass Deportations and Genocide: The Zigeunerbuch became an instrumental tool during the Nazi era. The detailed personal data, genealogies, and photographs it contained were used to identify individuals for deportation to concentration camps. During the Holocaust, these records directly contributed to the genocide of the Romani people.

➡️ Post-War Legacy: Shockingly, after the war, some of the records compiled by the Zigeunerzentrale continued to be used by police in Germany. These records perpetuated discrimination against Sinti and Roma communities well into the 20th century, as authorities carried over practices and personnel from the Nazi regime.

This use of data highlights the horrifying efficiency with which seemingly mundane administrative tools can be weaponized for oppression. The Zigeunerbuch was more than a record. It was an enabler of systematic injustice and violence.

The Persistent Legacy of the Zigeunerzentrale: Post-War Surveillance and Systemic Discrimination

The Zigeunerzentrale, in both its personnel and operational structure, was reestablished in Munich after World War II in 1946 under the name Zigeunerstelle and continued the surveillance and registration of individuals derogatorily labeled as "Gypsies," including Sinti and Roma communities. Later, it was euphemistically renamed the Landfahrerstelle (loosely translated as Central Office for the Control of Itinerants), a term rooted in Nazi-era language. This new iteration was incorporated into the Bavarian State Criminal Police Office (Bayerisches Landeskriminalamt). Despite Germany’s transition to democracy, the institutional practices of the Zigeunerzentrale persisted, reflecting a disturbing continuity with its past.

Continuity wasn’t abstract. You could see it in categories that survived regime change, in files that stayed active, and in practices defended as routine “policing.”

Bureaucracies are conservative by design. They preserve what they know. Sometimes that means stability. Sometimes it means rot. Files, procedures, and expertise persist even after political legitimacy collapses. What looks like inertia is often institutional self-preservation.

In the 1970s, the Landfahrerstelle was declared unconstitutional and subsequently disbanded. However, the personal files it maintained were handed over to so-called Tsiganologists, researchers who aligned themselves with the legacy of the Nazi-era Racial Hygiene Research Center (Rassenhygienische Forschungsstelle). This perpetuated the misuse of these records under the guise of academic inquiry.

Shockingly, the systemic targeting of Roma and Sinti did not end there. Until at least 1998, police authorities in Bavaria continued to document individuals under the "Sinti and Roma" category, citing "purely professional policing reasons." It was not until October 2001, over a century after the founding of the original Zigeunerzentrale, that the last remaining practice of ethnically profiling Sinti and Roma in Bavarian police records was officially ended, according to available reporting. In bureaucracies, “ended” on paper can still take time to end in practice.

This prolonged legacy of surveillance and discrimination underscores how deeply entrenched prejudices can linger within institutional frameworks, even under ostensibly democratic governance. It serves as a stark reminder of the critical need for vigilance and accountability to prevent the continuation of such practices under new guises.

On December 14, 2021, Dr. Eveline Diener, a Chief Detective, presented research on the Landfahrerzentrale's activities during a press event at the Bavarian State Criminal Police Office (BLKA) in Munich. Her findings highlighted the institution’s continued use of discriminatory practices in the post-war era.

The Landfahrerzentrale's operations remind us of how prejudiced systems can endure even after the fall of oppressive regimes. The lack of precise information on its closure underscores the need for further research and recognition of such institutions to fully understand and address historical injustices.

And the Zigeunerzentrale isn’t the only example of data weaponization in history. IBM’s punch card systems, for instance, played a role in Nazi Germany’s ability to catalog and track Jewish populations with chilling efficiency. Similarly, apartheid-era South Africa used passbooks, paper-based surveillance tools, to restrict movement and enforce racial segregation. These are just two examples from a long and troubling history of bureaucratic tools that, under the guise of efficiency, quietly became mechanisms of oppression and control.

What If AI Had Existed Then?

A useful rule of thumb: efficiency becomes oppression when optimization targets people instead of processes, especially when categories are inherited, stigmatizing, or non-consensual. You don’t need a police state to see the pattern. In enterprises, “optimization” can mean risk scores for employees, automated performance flags, or access controls that quietly punish the wrong proxies. The mechanism is the same: a label becomes a destiny.

When I say “AI” here, I mean machine learning systems that classify, rank, and predict from data. I do not mean sentient machines.

Counterfactuals only help if they show you which safeguards fail first. They’re useless if they turn into historical fan fiction.

The Zigeunerbuch was horrifyingly effective for its time - a meticulously constructed weapon of oppression, but constrained by the manual processes of its era.

Futurist Amy Webb identifies three general-purpose technologies as shaping the future through their profound and lasting impacts:

🚩 Artificial Intelligence: Like the steam engine or electricity, AI is recognized as a general-purpose technology because of its transformative economic and societal potential.

🚩 Advanced Sensor Technology: Sensors embedded in everyday devices collect real-world data, enabling richer models for behavioral predictions and generating proprietary data crucial for AI applications.

🚩 Biotechnology: Advances in synthetic and generative biology address global challenges, from food security to environmental degradation, by creating lab-grown meat and alternatives to traditional materials.

The key insight is their convergence, where breakthroughs in one spur progress in the others, creating a compounding "flywheel effect." This interconnected growth necessitates a shift in how industries and governments approach innovation, particularly in ensuring ethical safeguards.

Now, imagine how the story might have unfolded if today's technologies, namely AI, advanced sensors, and biotechnology, had been available to amplify the capabilities of the Zigeunerbuch.

This is a counterfactual stress test, not a claim about any specific company or system today. The point is to highlight which safeguards matter when classification and enforcement can be automated.

The Dark Potential of AI: Reimagining Historical Tools of Oppression

If AI had existed then, the Zigeunerbuch could have become a real-time eligibility machine, deciding who gets to move, work, reproduce, or exist in public without harassment.

The following technologies differ in form, but not in function: they turn prediction into permission and suspicion into policy.

⚠ Data Collection: AI could have automated the gathering of personal data, integrating digital fingerprints, social media activity, and predictive behavior analysis, creating a far more comprehensive surveillance system.

⚠ Data Analysis: Instead of relying on human clerks, AI algorithms could have processed vast amounts of data in real-time, identifying individuals and communities for persecution with unprecedented speed.

⚠ Facial Recognition: Modern AI tools could have matched photos from the Zigeunerbuch to individuals with chilling accuracy, making it nearly impossible for anyone to evade detection.

⚠ Predictive Policing: AI models could have flagged individuals as "high-risk" based on biased criteria, justifying preemptive arrests and deportations.

⚠ Automated Segregation: Algorithms could have sorted people into categories of "desirability" or "danger," accelerating discriminatory practices.

⚠ Propaganda Amplification: AI-powered content algorithms could have spread hate-filled ideologies more efficiently, inciting public support for oppressive policies.

⚠ Genetic Profiling: Advances in AI-driven genetics could have expanded racial profiling to entire lineages, furthering genocidal objectives.

Advanced Sensors: The Silent Collectors

Modern sensors, embedded in smartphones and urban infrastructure, would have created a constant stream of surveillance. They could have tracked movement, monitored behavior, and even measured physiological responses in real-time. For the Zigeunerbuch’s aims, sensors would have provided an unbroken trail of data to control marginalized groups.

⚠ Behavioral Tracking: Movement patterns could have been monitored to identify "undesirable" activities.

⚠ Surveillance at Scale: Sensors in public spaces could have replaced physical checkpoints, offering an omnipresent system of control.

Biotechnology: A New Frontier for Control

Biotechnology might seem unrelated to historical oppression, but its potential for genetic profiling is deeply concerning. Imagine if the Zigeunerbuch’s genealogical data were augmented with DNA analysis, categorizing populations based on fabricated notions of racial or genetic "purity."

⚠ Genetic Profiling: Entire families could have been targeted through lineage analysis, extending persecution across generations.

⚠ Biological Segregation: Advances in synthetic biology could have justified exclusionary practices under the guise of scientific legitimacy.

The Dual Nature of Technology

These technologies aren’t inherently oppressive. They take the shape of the incentives and institutions that deploy them. While they could amplify harm, they could also uncover injustice. AI might have flagged individuals for persecution, but it could also have revealed systemic discrimination. Sensors could have monitored marginalized communities, but they could also have documented state abuses. Biotechnology could have enabled racial profiling but also addressed global health disparities.

But this raises another question: could these technologies have been used to dismantle oppressive systems instead? Imagine if predictive analytics had identified patterns of police misconduct, if sensors had recorded systemic abuses, or if biotechnology had been used to address health disparities rather than enforce segregation. Wielded ethically, AI, sensors, and biotechnology could have been powerful tools for accountability and justice, exposing the abuses of the Zigeunerzentrale rather than enabling them.

The dual nature of these technologies, and the way AI, sensors, and biotechnology converge, highlights an urgent need: not just to regulate how they are used, but to actively design systems that prioritize equity and justice.

Modern Parallels and Ethical Stakes

While the Zigeunerzentrale and Zigeunerbuch are products of the past, their echoes are present today. Surveillance states, biased policing algorithms, and disinformation campaigns all leverage technology to target vulnerable communities. Predictive policing models, for instance, disproportionately flag individuals from minority groups due to biased training data, perpetuating cycles of systemic oppression.

The normalization of AI in systems with inherent biases can do real damage. If unchecked, it risks replicating the same patterns of discrimination that once drove the machinery of the Zigeunerzentrale.

To be clear: these aren’t equivalences of atrocity; they’re equivalences of method:

classification → prediction → action with weak recourse. At each step, it becomes harder to locate responsibility: “the model said so”, “the dashboard ranked it”, “the policy required it”.

Different countries, different contexts; same recurring vulnerability: systems that classify people at scale become tempting to use for governance shortcuts, especially under fear or pressure.

The most dangerous part is the feedback loop.

If a model flags a group more often, that group gets policed more. More policing creates more recorded incidents.

The next model trains on that “reality” and calls it objective.

At that point, injustice no longer feels imposed.

It feels statistically confirmed.

Sociologists call this self-validating social control: systems that generate the evidence they later cite as justification.
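To make that loop concrete, here is a toy simulation. It is a minimal sketch under stated assumptions, not a model of any real deployment: two hypothetical districts have exactly the same underlying incident rate, the "model" simply flags whichever district has more recorded incidents, most patrols go to the flagged district, and incidents only get recorded where patrols are present. Every name and number is illustrative.

```python
def simulate_feedback(rounds=10, true_rate=0.1, patrols=100,
                      initial_records=(12, 10), hot_share=0.7):
    """Return district A's share of all recorded incidents after each round.

    Assumptions (all hypothetical): both districts have the SAME true
    incident rate; the only difference is a small head start in the records.
    """
    records = list(initial_records)
    shares = []
    for _ in range(rounds):
        # "Prediction": the district with more records is flagged as the hot spot.
        hot = 0 if records[0] >= records[1] else 1
        # "Action": the flagged district receives most of the patrols.
        allocation = [patrols * (hot_share if i == hot else 1 - hot_share)
                      for i in range(2)]
        # Incidents are recorded in proportion to patrol presence,
        # not in proportion to any real difference between the districts.
        records = [r + a * true_rate for r, a in zip(records, allocation)]
        shares.append(records[0] / sum(records))
    return shares


shares = simulate_feedback()
print([round(s, 2) for s in shares])
```

District A starts with 12 of 22 recorded incidents. Its share of the record then rises every round toward the share of patrols it receives, even though nothing about the district itself differs. The data ends up describing where the patrols were sent.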

I’m not listing these to be dramatic. I’m listing them because they show how fast “risk scoring” becomes governance.

☑ Take, for example, the Correctional Offender Management Profiling for Alternative Sanctions (COMPAS) algorithm used in the U.S. criminal justice system. Designed to predict recidivism, it has been heavily criticized for racial bias: ProPublica’s 2016 analysis found that Black defendants who did not go on to reoffend were far more likely than white defendants to be labeled high-risk. Critics attribute this largely to biased data.

☑ Or consider Clearview AI, a facial recognition tool criticized for privacy violations and for the risk that authoritarian regimes could use it to track dissenters.

☑ Reporting and human rights investigations have documented AI-enabled surveillance and policing in Xinjiang that targets Uyghurs and other Muslim minorities, raising serious human rights concerns.

☑ And in India, the Aadhaar biometric ID system, while designed for efficiency and inclusion, has raised concerns about surveillance and data security.

These examples aren’t just echoes of the past. They’re active warnings about how technology can be weaponized in ways that feel eerily familiar. They also demonstrate that while technology evolves, the dangers of its misuse remain constant, underscoring the need for vigilance.

The Modern Threat of Neofascism and the Return of the Zigeunerbuch

Quick note: I’m not making a partisan argument here. I’m pointing at a pattern that repeats across countries and decades: economic insecurity, scapegoating, and scalable surveillance. When those conditions align, identity-based targeting becomes easier to justify and easier to automate.

I’m not predicting a specific regime. I’m naming the conditions that make bureaucratic targeting politically saleable.

This is about what happens when fear seeks administrative shortcuts.

The Zigeunerbuch was a tool of systemic oppression, built on the scapegoating of marginalized communities. While it may feel like a relic of history, its spirit could easily resurface in today’s climate, fueled not only by the misuse of modern technologies like AI, sensors, and biotechnology but also by the growing shadow of neofascism and other authoritarian movements. The same dynamics that enabled the Zigeunerbuch - fear, division, and a hunger for control - are all too present in a world where inequality is widening and societal precarity is the norm.

As economic insecurity rises, people search for explanations that feel actionable.

Authoritarian movements succeed not because they persuade, but because they simplify causality, turning messy, structural failure into a short list of “enemies”. People are angry at the systems that have failed them: systems that keep them poor, oppressed, and anxious about the future. That frustration and resentment are fertile ground for leaders who redirect the anger away from systemic issues and toward convenient scapegoats. Immigrants, minorities, and marginalized groups become easy targets, painted as the cause of economic stagnation and societal decline.

Neofascism offers a dangerous mix of economic protectionism and anti-immigrant rhetoric. It promises simple solutions - redistribution here, protectionism there - but these solutions often come wrapped in the language of exclusion and division. The policies, whether subtle or overt, are designed to "protect" one group at the expense of another, perpetuating cycles of discrimination and violence.

This is why the rise of neofascism today is so dangerous: it thrives in moments of collective frustration and loss of direction. In her video "Basic Income vs. Gaslighting the Precariat," Dr. Laurie Johnson highlights how the precariat, a growing class of economically insecure people that Guy Standing describes as a new dangerous class, is particularly vulnerable to this manipulation. This group has immense potential power, but the question remains: how will that power be channeled? History shows that in the absence of meaningful change, it often gravitates toward divisive ideologies that promise to make the world simpler by identifying enemies and eliminating them.

The Zigeunerbuch is a haunting reminder of how quickly these ideologies can evolve into bureaucratic tools of oppression. In an era where modern technologies can collect, categorize, and act on personal data with unprecedented efficiency, the potential for harm is magnified. Combine these capabilities with the current rise of neofascism, and the possibility of history repeating itself is chillingly real.

If we don’t address the structural inequalities driving people toward these movements - if we don’t provide real solutions that address their precarity - we risk creating the conditions for another Zigeunerbuch. Not as a ledger in a dusty archive, but as a real-time database powered by AI, sensors, and the complicity of a society willing to trade justice for the illusion of order.

📌 This article focuses on what individuals, companies, and policymakers can do to address the ethical risks posed by AI, sensors, and biotechnology. That is only part of the equation. The broader economic forces that drive societal division and vulnerability to technological misuse (inequality, precarity, and the rise of neofascism) are a separate, equally complex challenge. Addressing them requires systemic reform and is beyond the scope of this discussion. The action items in the next two sections are therefore aimed specifically at preventing the misuse of these technologies, not at the larger systemic issues mentioned above.

What Must Be Done to Prevent History from Repeating?

A simple philosophical rule applies: any system that classifies human beings must preserve the possibility of moral interruption.

Safeguards fail when they are procedural but not political.

Transparency without power to contest is documentation, not accountability.

The story of the Zigeunerzentrale is a cautionary tale, but it also offers lessons for the ethical development of AI, sensors, and biotechnology.

Here are the safeguards we must prioritize:

1️⃣ Data Ethics: Personal data must be protected from misuse across all three technologies.

✅ Explicitly limit the ability of AI and sensors to process sensitive attributes like race, ethnicity, or genetic information.

✅ Enforce data minimization principles, ensuring only strictly necessary data is collected and retained.

✅ Establish clear guidelines for how biometric, sensor, and genetic data are handled, with strong privacy protections.
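As an illustration of what data minimization can look like in practice, here is a minimal sketch. It assumes a simple dictionary record and a purpose-specific allow-list; the field names and the `minimize` helper are hypothetical and not taken from any particular system or regulation.

```python
# Hypothetical field names; a real system would define these per legal context.
SENSITIVE_FIELDS = {"race", "ethnicity", "religion", "genetic_markers"}


def minimize(record, allowed_fields):
    """Keep only the fields on the allow-list for this purpose.

    Refuses outright if the allow-list itself asks for a sensitive attribute.
    """
    forbidden = SENSITIVE_FIELDS & set(allowed_fields)
    if forbidden:
        raise ValueError(f"allow-list contains sensitive fields: {sorted(forbidden)}")
    return {key: value for key, value in record.items() if key in allowed_fields}


applicant = {
    "name": "A. Example",
    "postcode": "00000",
    "ethnicity": "(withheld)",
    "income": 1800,
}
print(minimize(applicant, allowed_fields={"name", "income"}))
# → {'name': 'A. Example', 'income': 1800}
```

Dropping a column is not enough on its own. Fields such as a postcode can act as proxies for the attributes that were removed, which is why the allow-list here is tied to a specific purpose rather than to "everything except the sensitive fields".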

2️⃣ Transparency and Accountability: Systems must be explainable, auditable, and held to rigorous accountability standards. If people can’t contest a label, “transparency” is just a PDF.

✅ Ensure that AI algorithms, sensor networks, and biotech systems are transparent in their design and decision-making processes.

✅ Mandate disclosure of algorithmic logic, data sources, and underlying assumptions, particularly in law enforcement, public health, or governance applications.

✅ Include third-party audits and certifications for ethical compliance across all three fields.

✅ Right to contest classification: If a system labels you, you must have a clear path to see the basis, challenge it, and correct records, with consequences for agencies that refuse.
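A right to contest only means something if it is built into the record itself. The sketch below shows one way that could look, assuming a hypothetical `Classification` record: the basis for the label is stored alongside it, a challenge blocks any action on the label, and every correction is kept in a history. The class, its fields, and the status names are illustrative assumptions, not an existing standard.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    ACTIVE = "active"
    CONTESTED = "contested"
    CORRECTED = "corrected"


@dataclass
class Classification:
    subject_id: str
    label: str
    basis: list  # data sources and rules behind the label, visible to the subject
    status: Status = Status.ACTIVE
    history: list = field(default_factory=list)

    def contest(self, reason):
        """The labeled person challenges the classification."""
        self.status = Status.CONTESTED
        self.history.append(("contested", reason))

    def correct(self, new_label, reviewer):
        """A named reviewer resolves the challenge and the old label is kept on file."""
        self.history.append(("corrected", self.label, new_label, reviewer))
        self.label = new_label
        self.status = Status.CORRECTED

    def may_act_on(self):
        """No enforcement while a challenge is still open."""
        return self.status is not Status.CONTESTED


record = Classification("subject-1", "high-risk", ["address history", "rule 7"])
record.contest("address belongs to a previous tenant")
print(record.may_act_on())
```

The design choice that matters is `may_act_on`: contesting a label pauses its consequences, so the burden of resolving the dispute sits with the agency rather than with the person who was labeled.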

3️⃣ Bias Mitigation: Rigorous testing and oversight are essential to detect and reduce biases in training data and system outputs.

✅ Mandate that training datasets for AI, sensor-based systems, and biotech applications are audited for historical and systemic biases, and corrected as far as possible.

✅ Incorporate bias detection tools and continuous testing to ensure fairness in system outcomes.
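One simple form of bias detection is to compare error rates across groups, which is the kind of disparity at the center of the COMPAS criticism. The sketch below computes the false positive rate per group: among people who did not reoffend, how many were flagged as high-risk anyway. The data is synthetic and the function name is hypothetical. It is one fairness check among several, and different fairness definitions can conflict with each other.

```python
from collections import defaultdict


def false_positive_rates(rows):
    """rows: iterable of (group, flagged_high_risk, reoffended) tuples.

    Returns, per group, the share of non-reoffenders who were flagged anyway.
    """
    flagged = defaultdict(int)
    negatives = defaultdict(int)
    for group, flagged_high_risk, reoffended in rows:
        if not reoffended:
            negatives[group] += 1
            if flagged_high_risk:
                flagged[group] += 1
    return {group: flagged[group] / count for group, count in negatives.items()}


# Synthetic example data, invented for illustration only.
rows = (
    [("A", True, False)] * 4 + [("A", False, False)] * 6
    + [("B", True, False)] * 2 + [("B", False, False)] * 8
    + [("A", True, True)] * 3  # reoffenders do not count toward false positives
)
print(false_positive_rates(rows))
# → {'A': 0.4, 'B': 0.2}
```

In this invented example, people in group A who did nothing further are flagged twice as often as people in group B. A check like this belongs in continuous testing, because the gap can reopen whenever the model is retrained on new data.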

4️⃣ Historical Awareness: Developers and policymakers must understand how technology has been weaponized in the past to prevent similar abuses.

✅ Require training for AI, sensor, and biotech professionals on historical misuses of technology and their impacts on marginalized communities.

✅ Involve ethicists, historians, and sociologists as advisory panelists in the design and deployment of these technologies.

5️⃣ Robust Legal Frameworks: Strong laws must ensure ethical compliance and penalize misuse.

✅ Enact comprehensive regulations to govern the use of AI, sensor technology, and biotech, holding developers, users, and institutions accountable.

✅ Prohibit applications that exploit biometric or genetic data for discrimination or profiling.

6️⃣ Human Oversight: Critical decisions should never be left solely to autonomous systems.

✅ Prohibit fully autonomous decision-making in sensitive areas like policing, governance, and healthcare.

✅ Require human intervention and review for all high-stakes decisions influenced by AI, sensors, or biotechnology.

✅ A system isn’t safer because a human clicks “approve.” It’s safer when humans have time, authority, and incentives to disagree with the model.
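Whether human review is real or a rubber stamp can be checked from the review logs. The sketch below assumes a hypothetical log format in which each review records the model's decision, the human's decision, and the seconds spent. The thresholds are placeholders I chose for illustration, not recommended values.

```python
from statistics import median


def oversight_health(reviews, min_override_rate=0.02, min_median_seconds=60):
    """Summarize how often reviewers disagree with the model and how long they take.

    reviews: list of dicts with keys "model", "human", "seconds" (assumed format).
    """
    if not reviews:
        raise ValueError("no reviews to evaluate")
    overrides = sum(1 for r in reviews if r["model"] != r["human"])
    rate = overrides / len(reviews)
    typical_seconds = median(r["seconds"] for r in reviews)
    return {
        "override_rate": rate,
        "median_seconds": typical_seconds,
        # Near-zero disagreement or very fast approvals suggest rubber-stamping.
        "rubber_stamp_risk": rate < min_override_rate
        or typical_seconds < min_median_seconds,
    }


fast_approvals = [{"model": "flag", "human": "flag", "seconds": 5}] * 50
print(oversight_health(fast_approvals)["rubber_stamp_risk"])
# → True
```

A low override rate does not prove the model is right. It may only show that reviewers lack the time or the authority to disagree, so a warning here is a reason to look at workloads and incentives, not only at the model.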

7️⃣ Content Moderation and Disinformation Prevention: AI-driven algorithms and sensor-enabled data streams can amplify harmful ideologies if unchecked.

✅ Mandate that platforms using AI or sensor data for content dissemination implement anti-disinformation measures.

✅ Impose penalties for amplifying biased or harmful narratives through algorithmic or sensor-driven processes.

8️⃣ Ethical Use of Biotechnology: Genetic and synthetic biology applications must prioritize equity and justice.

✅ Outlaw the use of biotechnology for racial or genetic profiling.

✅ Focus biotech efforts on addressing global challenges, such as food security and health disparities, while avoiding applications that could reinforce systemic inequalities.

Never Again: Turning Lessons of the Past into Action for an Ethical AI Future

The lessons of the Zigeunerzentrale are not just historical footnotes; they are warnings written into the record, and into the bodies of the people who paid for it, urging us to safeguard the present and future.

The Zigeunerbuch is a grim reminder of how data and technology can be weaponized for unimaginable harm.

While nothing can surpass the horrors of the Holocaust, the thought of AI amplifying such systems adds another layer of chilling potential. The devastation could have been even more widespread and efficient, but it could never be "worse" than the unthinkable atrocities already committed.

Today, we hold the responsibility to ensure that technology is never again used to enable such acts of cruelty.

Here’s what individuals, companies, and policymakers can do today:

✅ Raise Awareness: Share stories like Anna's to highlight the human cost of biased systems. Engage with and amplify voices from impacted communities to ensure their stories shape future technology.

✅ Advocate for Transparent Policies/Legislation: Push for laws requiring transparency in AI algorithms, explainable AI and robust third-party audits. Join movements demanding transparency in AI systems. Support organizations focused on ethical AI development.

✅ Support Open-Source Ethical AI: Promote tools and frameworks designed to ensure fairness and accountability.

✅ Educate Yourself and Others: Learn about the history of systemic oppression and its modern parallels. Understand how AI systems work and actively question their impacts, especially in marginalized communities. Push for historical education as part of AI ethics training, ensuring decision-makers understand past mistakes.

✅ Call for Accountability: Demand that companies disclose how their AI tools are trained and used. Call on companies to release transparency reports and commit to ethical AI practices.

These steps are small, but collectively, they create pressure for change.

Conclusion: Building Tools for Liberation, Not Oppression

Technology is neither good nor evil. It is a force multiplier for whatever incentives and categories we embed in it.

As we advance, we must ask ourselves:

Are we building tools for liberation or oppression?

Are we creating the next Zigeunerzentrale?

The tools we build shape the rules we end up living under. If we get the safeguards wrong, we won’t notice at first. That’s the problem. Let us ensure these tools serve humanity, fostering equality and justice rather than harm.

If you demand only one safeguard, demand the right to see and contest the data and logic used to classify you. Without that, every other promise is optional.

A society that cannot question its classifications eventually stops recognizing its own cruelty. And by the time cruelty feels normal, it no longer needs justification.

Final Reflection: Why the Zigeunerbuch Matters

The Zigeunerbuch is more than a historical artifact. It is a symbol of how centralized data can become a tool of dehumanization.

By understanding its role in history and imagining the impact of modern AI in similar hands, we can commit ourselves to creating ethical safeguards that prevent such atrocities in the future.

⚠ History gives us the warnings. What we do with them is up to us.

Optional Reading

Here are the records for all six of my paternal great-grandparents and great-great-grandparents from the Zigeunerbuch and the Holocaust Survivors and Victims Database.

Sources and Links

👉 Miscellaneous Links:

🔗 Reichszentrale zur Bekämpfung des Zigeunerunwesens – Wikipedia

🔗 Zigeuner Buch : Dillman, Alfred

🔗 Basic Income vs. Gaslighting the Precariat

🔗 Rezension zu: A. Albrecht: Zigeuner in Altbayern 1871-1914

👉 My Family’s Concentration Camp Records:

🔗 My great-grandfather Johann Winter’s Auschwitz record

🔗 My great-grandmother Philippine Winter’s (née Köhler) Auschwitz record

🔗 My great-grandfather’s brother Johann Evangelist Winter’s Mauthausen record

👉 IBM:

🔗 IBM and the Holocaust - Wikipedia

🔗 How IBM Helped The Nazis Carry Out The Holocaust

👉 South Africa:

🔗 Pass law | Definition, South Africa, & Apartheid | Britannica

🔗 Apartheid Era Pass Laws of South Africa

🔗 Apartheid In South Africa: Why It Was Implemented And Key Historical Facts

👉 COMPAS:

🔗 How We Analyzed the COMPAS Recidivism Algorithm — ProPublica

🔗 Can the criminal justice system's artificial intelligence ever be truly fair?

🔗 Algorithmic Bias in Recidivism Prediction: A Causal Perspective

👉 Clearview AI:

🔗 How facial-recognition app poses threat to privacy, civil liberties — Harvard Gazette

🔗 Facial recognition startup Clearview AI settles privacy suit | AP News

🔗 AGs from 22 states and DC oppose Clearview’s biometric data privacy settlement | Biometric Update

👉 China:

🔗 How China’s Government Is Using AI on Its Uighur Muslim Population | FRONTLINE

🔗 China: Unrelenting Crimes Against Humanity Targeting Uyghurs | Human Rights Watch

🔗 China’s Algorithms of Repression: Reverse Engineering a Xinjiang Police Mass Surveillance App | HRW

🔗 China’s high-tech surveillance drives oppression of Uyghurs - Bulletin of the Atomic Scientists

🔗 US sanctions Chinese firms over alleged repression of Uighurs | Human Rights | Al Jazeera

👉 Aadhaar:

🔗 Right to Privacy and Aadhaar – LAW Notes

🔗 The Right to Privacy and the Aadhaar Verdict | LawFoyer

🔗 Data Privacy In The Context Of Aadhaar And India's Digital Identity Systems

🔗 Aadhaar Card Security and Privacy Concerns

If this resonated with you, consider subscribing to receive more insights like these straight to your inbox. Together, we can continue the conversation and shape the future on our own terms.

The views expressed here are entirely my own. This newsletter is not affiliated with any organization, and no confidential or non-public information is shared.