Can AI Ever Develop Instincts?

Why Pre-Thought Intelligence Might Be the One Thing Machines Will Never Truly Have

👉 What do you think: can machines ever develop something like instinct, or is instinct still a human thing? I’d love to hear what you think as you go. ⬇

We tend to equate intelligence with thinking: cold, calculated reasoning, like a spreadsheet or a courtroom transcript. And to be fair, that’s the kind of intelligence current AI is already pretty good at. Give it a logic problem, a pattern, or a thousand gigabytes of data, and it’ll crunch its way to a strong answer.

But human intelligence doesn’t always work like that. Some of our best, life-saving, and creative decisions happen before we’ve had time to think.

A mother doesn’t run the numbers before yanking her child out of danger.

A soccer player doesn’t do geometry in their head before curving a shot into the top corner.

A firefighter doesn’t stop to consult a checklist the moment before charging into a burning building.

They know. They feel it. Then they move.

And even that “just knowing” isn’t evenly distributed. It’s shaped by training, repetition, and the resources people can access. That means instinct is also shaped by institutions and inequality, not just biology.

I once watched a toddler stumble near a staircase at a friend’s place. Before anyone could react, the mother lunged. No calculation, no pause. She didn’t think. She just acted. That’s instinct.

These moments aren’t separate from intelligence. They’re one of its oldest and most fragile expressions, part of the blueprint. This is what we call instinct: your body moving before your mind catches up.

This is the dimension current AI systems don’t have, at least not in the human sense.

By instinct here, I don’t mean speed alone, or pattern-matching on autopilot. I mean a form of intelligence shaped by survival. It’s tied to a body, loaded with priorities, and oriented toward staying alive and connected in a messy world. Instinct is not just what we do quickly; it’s what we are prepared to do before we have time to think.

And some of what we call “instinct” is social, not just biological. We learn the world so deeply, through family, class, culture, and routine, that it starts to feel like nature. What looks like “pure instinct” is often social training that your brain now runs automatically.

In plain terms, instinct sits somewhere between reflex and reason. It’s not blind reaction, but it’s not deliberation either. It is a kind of snap judgment: action guided by a lived sense of what matters before you start talking yourself through it.

That’s not to say human instincts are always correct. They’re often riddled with bias, as plenty of research shows. But they represent a deeply embedded, evolution-shaped way of reacting that current AI still doesn’t reproduce in structure or in stakes.

The Problem with AI’s “Smartness”

AI dazzles. It can outplay us in chess, write a passable poem, and spot patterns in medical images that humans can miss. But all of that is based on processing after the fact. It processes data, spots patterns, and produces an output.

Organizational researchers have long noted that measurement systems can shape what organizations treat as competence. AI can intensify that dynamic by making some kinds of work easier to count than others.

Instinct is different. Evolution baked it in. It isn’t just fast; it’s meaningful: a set of mental shortcuts, shaped over time, that help us survive before we have time to think. It comes from a body shaped by a long history of learning what matters and what doesn’t.

Babies know how to suckle.

We flinch before a baseball hits us.

We fear snakes and heights, not spreadsheets and software glitches.

AI doesn’t have a biological stake in outcomes the way we do. It has no lived history, no inherited drives, and no instinct in the human sense. Its “priorities” are assigned by design, not felt.

AI can simulate some reflexes through reinforcement learning or behavioral heuristics. But these are surface-level imitations and not naturally developed abilities born from embodied, biological survival needs.

Yes, some argue that training AI over billions of data points is its own kind of evolution. But it’s guided optimization. Not the blind, survival-driven, time-tested pressure that shaped us. AI optimizes toward goals we set; we adapted because we had to survive.

Can We Train AI to Fake It?

Machines can already look as if they act on instinct. And to be fair, the results can be impressive:

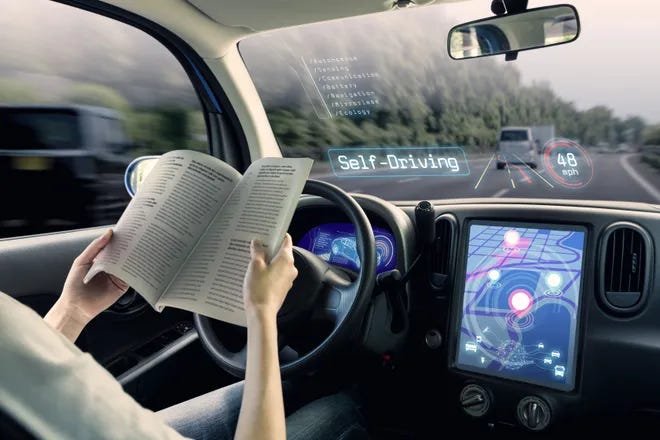

Self-driving cars can now anticipate erratic lane changes before a human expects them.

Diagnostic algorithms can spot disease signals that are easy for humans to miss.

Trading bots can react to financial tremors faster than any human could.

There’s a serious counter-argument. Some researchers argue that instinct is simply compressed experience: that if an agent is exposed to enough environments, feedback loops, and physical interaction, instinctive behavior could emerge. On this view, reinforcement learning plus embodiment is not imitation, but the early stages of something new.

But current AI reacts to patterns more than meaning. Meaning, for humans, is tied to what’s at stake for us. A firefighter senses a structure’s impending collapse with their body: the creak, the smell, the heat. A pilot feels turbulence in their bones before the instruments catch up. AI feels neither. It waits for sensor input, for a reading.

Even advanced AI models that combine sensing and reasoning still lack biological grounding. These models process inputs; humans experience them. Some researchers in embodied AI and robotics argue that meaning can emerge through physical interaction with the world. And they have a point. But evolution didn’t just give us a body. It gave us a history. One that shaped values, pain responses, attachment, and survival strategies. That depth isn’t a feature you can just add; it emerges only under conditions of vulnerability, dependence, and consequences you can’t undo.

Even at its fastest, AI isn’t instinctive. It’s quick calculation.

The Missing Ingredient: Biological Priorities

Human instincts aren’t just quick reflexes. They are soaked in meaning, shaped by the evolutionary forces that made us care about survival, belonging, love, safety, and curiosity.

We intuitively seek connection.

We leap into action for our children.

We can sense betrayal in a glance and danger in a silence.

AI doesn’t have that kind of compass. It isn’t driven by survival. It doesn’t value food over pixels, family over files, freedom over function. It does what we set it up to do, within the bounds of the data it’s been given. Without data, it can’t act. And survival isn’t part of its equation anyway.

And no, that’s not a jab at AI’s lack of empathy. It’s a reminder that machine goals are artifacts of human design, not emergent biological impulses.

And “human design” is rarely neutral. In many organizational contexts, the goals embedded in systems reflect the priorities of the people who choose and deploy them. It’s a pattern well documented in studies of technology and institutions. AI doesn’t need instincts to shape a society. It just needs to be plugged into systems that already have unequal incentives.

Many of our instincts are not about individual survival at all, but about coordination: knowing when to speak, when to yield, when to trust, when to doubt. These social instincts didn’t come from data optimization, but from living inside fragile groups where misreading the room could mean exclusion.

And sometimes instinct isn’t even personal. It’s collective. Crowds move, workplaces align, and families respond to crisis with roles that appear instantly. A lot of human “instinct” is shared scripts: norms we didn’t invent but learned, and that we act out without thinking.

The Real Edge: Instinct Makes Humans Thrive in Chaos

Put plainly: AI tends to win in structured, rule-based environments. Think chess, calculus, or customer support queues. But drop it into the real world, where ambiguity reigns and rules dissolve, and it struggles.

More precisely, AI struggles not with ambiguity itself, but with ambiguity you can’t fully write down in advance, especially when values conflict and consequences unfold in real time.

Humans can adapt in chaos, but not evenly. Our ability to improvise depends on experience, social support, and whether failure is survivable.

We improvise.

We decide with incomplete information.

We adapt on the fly.

That’s not a bug. That’s a core feature of human cognition.

And it’s driven by instinct.

We make gut calls in emergencies. We trust hunches in creativity. We sense what’s right, and sometimes that pre-thought knowing turns out to be more accurate than our careful calculations.

Intuition can mislead us, too. That’s why the goal isn’t to dismiss AI or place instinct on a pedestal. It’s to understand where each tool excels and design accordingly.

The Path Ahead

This isn’t a competition. It’s about recognizing where each shines.

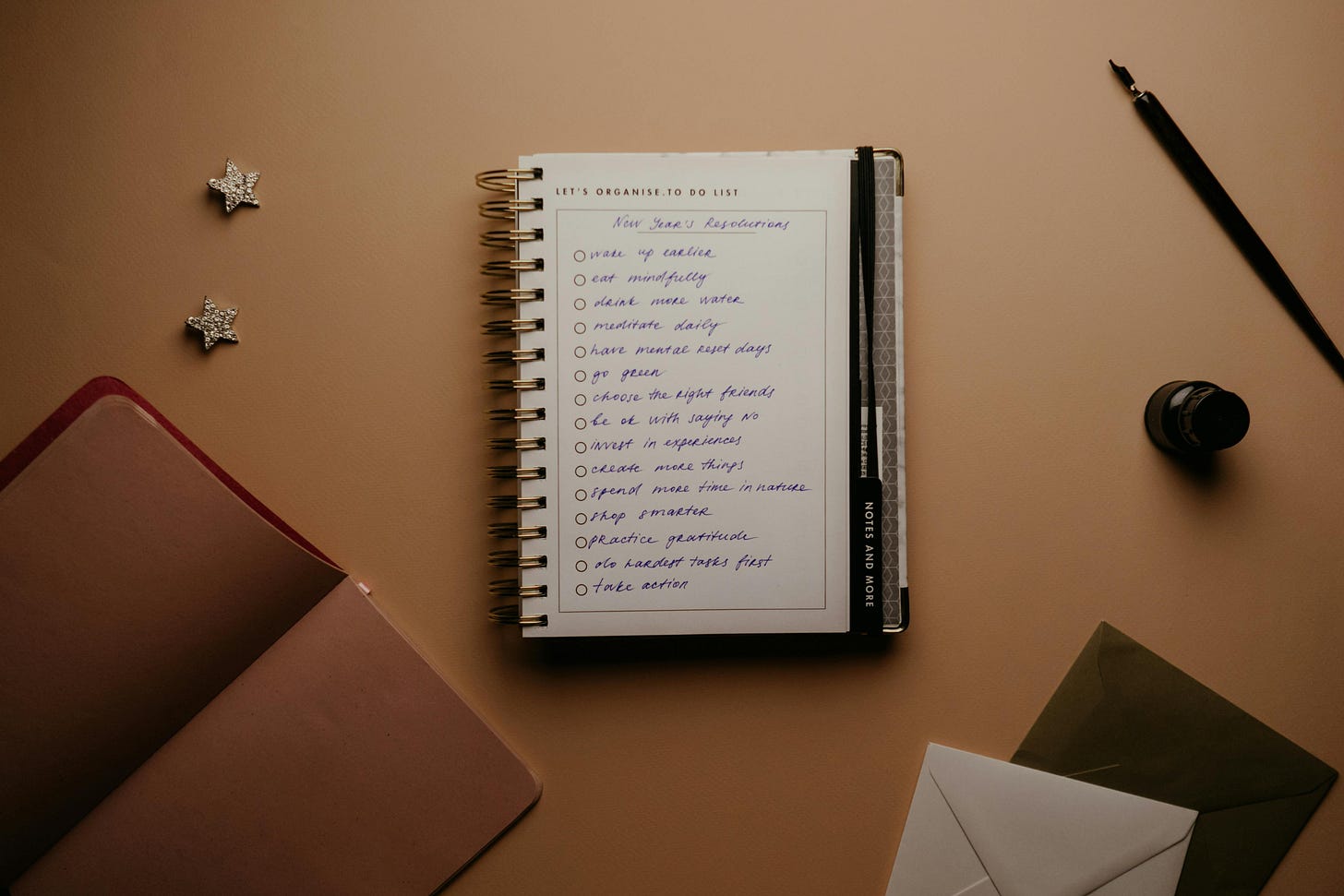

Once you start thinking about it this way, a few guidelines almost write themselves:

Let AI handle the heavy data work. Let it take on logistics, spreadsheets, and predictions. That’s what it’s best at.

Let humans handle uncertainty. Ethics. Crisis response. Strategy. AI has no gut. You do.

Build AI that respects human instinct. Instead of overwriting what evolution built, design tools that amplify our intuition.

Design for real workplaces, not ideal users. Assume time pressure, incentives, status dynamics, and fear of being wrong. If your system only works when people have time to be thoughtful, it won’t work where it matters.

Don’t assume speed equals wisdom. AI may be faster, but fast isn’t the same as wise. Knowing when not to act is often the most intelligent thing a human can do.

Wisdom isn’t in how fast we choose. It’s in what we prioritize. And our priorities are rooted not in data, but in experience, context, and care.

The Wisdom Before Thought

People often say AI is starting to think like us.

But maybe it’s not catching up. Maybe it’s diverging, getting better at a different kind of intelligence entirely: one that reacts to patterns only after the data shows up.

It’s useful. Absolutely.

Some philosophers and cognitive scientists won’t buy this line. They will argue that meaning itself could emerge from sufficiently complex systems. I’m taking a narrower view here. Meaning without vulnerability is, at best, imitation. It isn’t lived orientation.

It isn’t human-like in the way we usually mean when we talk about instinct.

Real intelligence, the kind rooted in care and survival, rarely begins with a thought. It starts with a feeling. A reflex. A knowing. It starts with instinct.

Instinct (biologically hardwired) and intuition (patterned, experiential knowing) are not the same, but both bypass deliberate reasoning. And both resist being reduced to statistical inference alone.

And even if we feed current systems far more data, that is something AI won’t acquire unless it changes so radically that it stops being what we currently call AI.

Because the smartest things we do, we don’t think about. We just do them. And that’s what keeps us human. That’s what I tell myself when I act before I’ve fully thought it through. And it helps. Most days.

Takeaways

AI is advancing fast, but there are some things it still can’t feel, sense, or know the way we do. Here’s what to remember:

AI excels at structured, data-heavy environments. But instinct (fast, meaningful, built-in intelligence) gives humans the edge in chaos.

Instinct isn’t just reaction time. It’s pre-thought knowing, shaped by evolution, soaked in meaning, and hard to replicate with code alone.

We should stop trying to make AI more human and instead focus on building partnerships that amplify the best of both.

The future isn’t about choosing between humans and machines. It’s about recognizing the unique genius of each and knowing when to trust the data, and when to trust your gut.

Mistaking AI’s speed or pattern recognition for instinct can lead to misplaced trust, which gets dangerous in domains where lives, ethics, or judgment are on the line.

In the end, instinct isn’t a bug in the human system. It’s one of our most powerful features. Let’s not design it out.

👉 Do you agree that instinct gives humans the edge, or do you see it differently? Jump into the comments and let’s continue the conversation. ⬇

If this resonated with you, consider subscribing to receive more insights like these straight to your inbox. Together, we can continue the conversation and shape the future on our own terms.

The views expressed here are entirely my own. This newsletter is not affiliated with any organization, and no confidential or non-public information is shared.